Facebook promises to "dramatically reduce" developers' access to user data

Mark Zuckerberg spoke to The New York Times about the latest scandal surrounding Facebook.

Several years ago, an analytics company bought information about 50 million Facebook users from an app developer. The company claims to have used this data to influence political campaigns and presidential elections. Full coverage of the events is in the second part of this article.

Rough times coming for app developers

Facebook's CEO said that the social network would "dramatically reduce the amount of data that developers have access to".

Facebook will "do a full forensic audit" of all apps that "have any suspicious activity".

In addition, apps that a user has not launched for three months will lose access to that user's data. "One of the steps we’re taking is making it so apps can no longer access data after you haven’t used them for three months," Zuckerberg said.
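
To make the three-month rule concrete, here is a minimal sketch of how such a check could work. It is not Facebook's implementation: the function name `may_access_user_data` is hypothetical, and "three months" is approximated as 90 days because no exact figure was given.

```python
from datetime import datetime, timedelta

# Assumption: "three months" is treated as 90 days; the exact cutoff was not stated.
INACTIVITY_LIMIT = timedelta(days=90)


def may_access_user_data(last_used: datetime, now: datetime) -> bool:
    """Return True while the user's last launch of the app is within the limit."""
    return now - last_used <= INACTIVITY_LIMIT


now = datetime(2018, 3, 22)
# Last launched 49 days before `now`: still within the limit.
print(may_access_user_data(datetime(2018, 2, 1), now))
# Last launched 141 days before `now`: access would be cut off.
print(may_access_user_data(datetime(2017, 11, 1), now))
```

Under this sketch an app keeps access only as long as the user keeps coming back; a single long gap is enough to cut it off.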

Developers who want access to sensitive data for their apps will have to sign a contract with the social network and have "a real person-to-person relationship" with Facebook. Zuckerberg named religious beliefs and sexual orientation as examples of sensitive data.

What happened to Facebook and who abused what data

- A company called Cambridge Analytica (CA) harvested about 50 million Facebook user profiles.

- They used this information to influence the results of the US presidential election in 2016, the outcome of the “Brexit” referendum in the UK in 2016, and probably some other political campaigns in other countries.

- CA bought the data for about $1 million from an American researcher named Aleksandr Kogan.

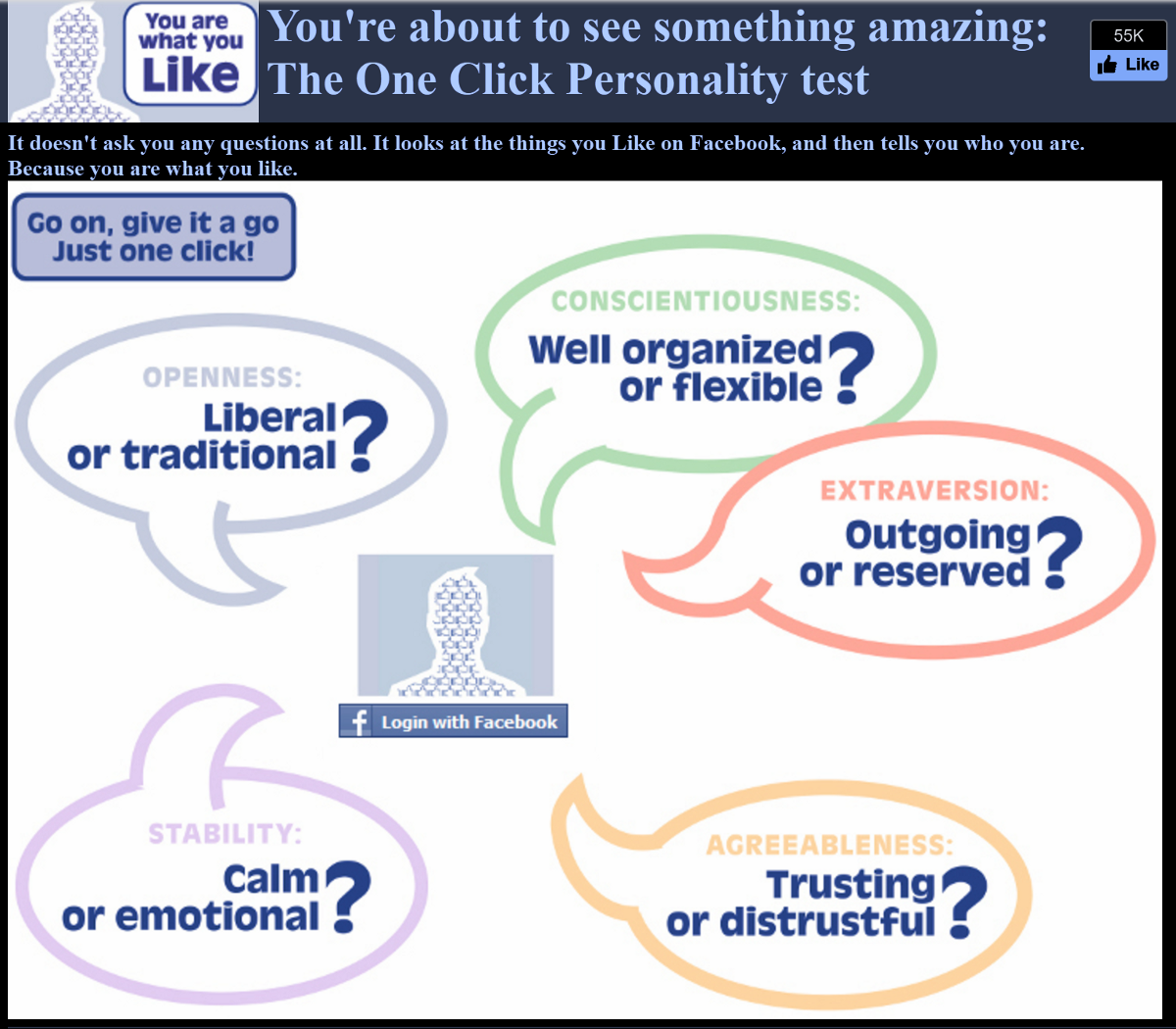

- Back in 2014, Kogan created a Facebook survey app called "thisisyourdigitallife". The app accessed users' basic profile information and their "likes". It also collected data about users’ friends, and this is how the app's 270,000 users provided data about 50 million Facebook users.

- There was nothing illegal in this activity -- Facebook lets app developers access profiles and "likes" if users agree. The app’s ToS disclosed the data collection.

- But selling this data to a third party might be a violation of Facebook rules. Facebook says that Kogan "lied to them" about the research purposes of the app.

- At a public talk in 2014 Kogan said that he had "a sample of 50+ million individuals about whom we have the capacity to predict virtually any trait."

- CA's activities and its methods of harvesting Facebook data had already been covered by the media in 2014, 2015 and 2016.

- CA worked not only for Trump but also for other presidential candidates -- Ted Cruz and Ben Carson, as The Guardian found out back in 2015. Facebook said at the time that it would investigate the situation.

- In 2015, Facebook banned the app "thisisyourdigitallife" and demanded that CA delete the user data gathered by this app.

- Only in March 2018 did Facebook finally suspend CA itself from its platform.

- It happened after several large media outlets covered the recent revelations of former CA employee Christopher Wylie and found documents that supported his claims.

- Among other things, Wylie said: "We exploited Facebook to harvest millions of people’s profiles. And built models to exploit what we knew about them and target their inner demons. That was the basis the entire company was built on."

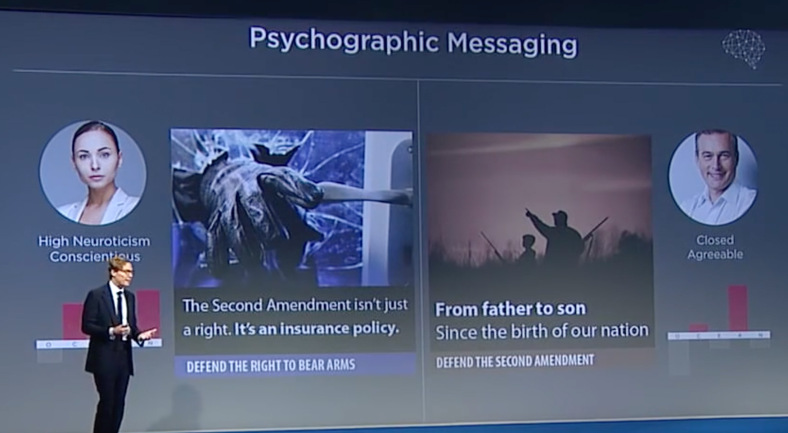

- Back in 2016, CA's CEO Alexander Nix had already said that his company built a model based on "hundreds and hundreds of thousands of Americans", a "model to predict the personality of every single adult in the United States of America." They called it "psychographic modeling techniques."

- It is not clear whether CA’s models and techniques actually work for predicting people’s behavior or manipulating it. Scientific research provides contradictory results.

- Cambridge Analytica’s parent company, SCL, has created psychological profiles of 230 million Americans and works with the Pentagon.

- By Tuesday, Facebook had lost $60 billion of its stock market value. Predictions of tighter social media regulation also hurt other companies: shares of Snap Inc fell 2.5% and shares of Twitter Inc fell more than 10%.

- Facebook faces an FTC investigation, possible fines and lawsuits.

- The hashtag #deletefacebook is popular on social media. Brian Acton, a co-founder of WhatsApp who sold the messenger to Facebook, also joined the campaign and posted a tweet with this hashtag.

- A Facebook spokesperson said: "The entire company is outraged we were deceived. We are committed to vigorously enforcing our policies to protect people’s information and will take whatever steps are required to see that this happens."

- There is a conflict inside Facebook. The company’s current and former employees disagree on how the social network should react to the situation and how it should manage user data in general.
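
The reach figures in the timeline above (270,000 app users, about 50 million profiles) imply a rough multiplier per app user. The back-of-the-envelope calculation below assumes every harvested profile is counted once and ignores friends shared between app users, so it is only an average, not a measured friend count.

```python
# Figures taken from the timeline above.
APP_USERS = 270_000
PROFILES_REACHED = 50_000_000

# Average number of profiles exposed per person who installed the app.
profiles_per_app_user = PROFILES_REACHED / APP_USERS

print(round(profiles_per_app_user))  # about 185
```

In other words, each person who took the survey exposed, on average, roughly 185 profiles.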

Do "likes" matter?

One of the most intriguing aspects of this situation is whether your likes on Facebook really help others study you and influence your choices. The "likes" in question are not your reactions to posts and comments; they are the pages, companies, people, books, shows, and so on that you have added as "liked" to your Facebook profile.

The power of likes is a complicated issue, so we'll just quote two articles here.

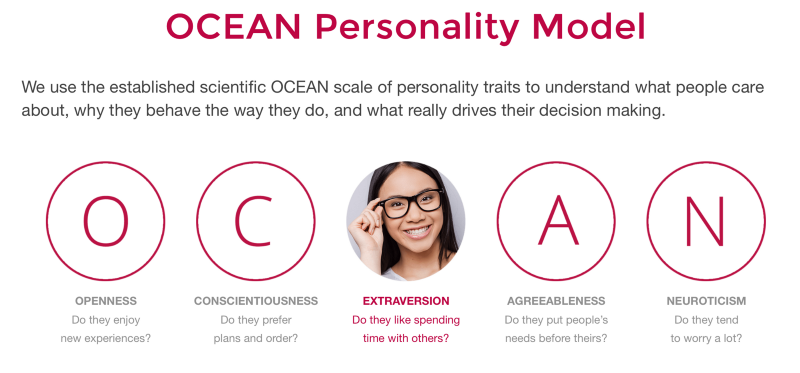

Cambridge Analytica has marketed itself as classifying voters using five personality traits known as OCEAN — Openness, Conscientiousness, Extroversion, Agreeableness, and Neuroticism — the same model used by University of Cambridge researchers for in-house, non-commercial research. The question of whether OCEAN made a difference in the presidential election remains unanswered

Cambridge Analytica has claimed that OCEAN scores can be used to drive voter and consumer behavior through "microtargeting," meaning narrowly tailored messages. Nix has said that neurotic voters tend to be moved by “rational and fear-based” arguments, while introverted, agreeable voters are more susceptible to “tradition and habits and family and community.”


[Motherboard](https://motherboard.vice.com/en_us/article/mg9vvn/how-our-likes-helped-trump-win):


Remarkably reliable deductions could be drawn from simple online actions. For example, men who "liked" the cosmetics brand MAC were slightly more likely to be gay; one of the best indicators for heterosexuality was "liking" Wu-Tang Clan. Followers of Lady Gaga were most probably extroverts, while those who "liked" philosophy tended to be introverts. While each piece of such information is too weak to produce a reliable prediction, when tens, hundreds, or thousands of individual data points are combined, the resulting predictions become really accurate.

Kosinski and his team tirelessly refined their models. In 2012, Kosinski proved that on the basis of an average of 68 Facebook "likes" by a user, it was possible to predict their skin color (with 95 percent accuracy), their sexual orientation (88 percent accuracy), and their affiliation to the Democratic or Republican party (85 percent). But it didn't stop there. Intelligence, religious affiliation, as well as alcohol, cigarette and drug use, could all be determined. From the data, it was even possible to deduce whether someone's parents were divorced.

The strength of their modeling was illustrated by how well it could predict a subject's answers. Kosinski continued to work on the models incessantly: before long, he was able to evaluate a person better than the average work colleague, merely on the basis of ten Facebook "likes." Seventy "likes" were enough to outdo what a person's friends knew, 150 what their parents knew, and 300 "likes" what their partner knew. More "likes" could even surpass what a person thought they knew about themselves.
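
The idea quoted above, that many weak signals add up to a confident prediction, can be illustrated with a toy calculation. This is not the researchers' actual model. It is a naive Bayes-style sketch that assumes every "like" is an independent piece of evidence, and the likelihood ratio of 1.2 per "like" is an invented number used only for illustration.

```python
import math


def combined_probability(likelihood_ratios, prior=0.5):
    """Combine independent pieces of evidence by adding their log-odds."""
    log_odds = math.log(prior / (1 - prior))
    for ratio in likelihood_ratios:
        # Each ratio says how much more likely the "like" is if the trait is present.
        log_odds += math.log(ratio)
    # Convert the accumulated log-odds back into a probability.
    return 1 / (1 + math.exp(-log_odds))


# Hypothetical: every "like" is only weak evidence (ratio 1.2) for some trait.
for number_of_likes in (1, 10, 68):
    probability = combined_probability([1.2] * number_of_likes)
    print(number_of_likes, round(probability, 3))
```

With one such "like" the estimate barely moves from 50 percent, with ten it is already well above 80 percent, and with 68 it is close to certainty. Real "likes" are correlated with each other, so real-world gains are smaller than this idealized sketch suggests.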